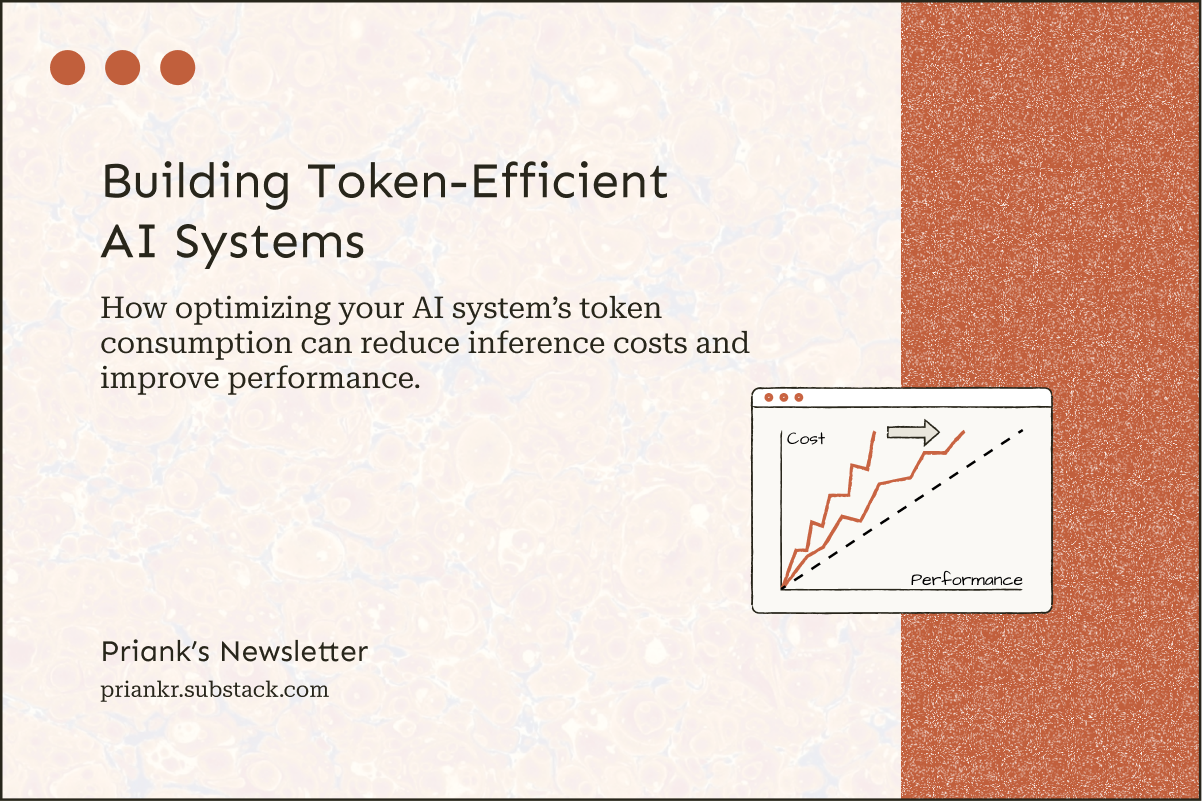

Building Token-Efficient AI Systems

How optimizing your AI system’s token consumption can reduce inference costs and improve performance.

Over the past few years, there’s been an immense focus on getting better outputs from AI systems, since these improvements make solutions more useful and valuable. However, the cost of consistently delivering good results is now becoming a major concern. Higher spending doesn’t always produce higher performance, even as the models get more powerful.

AI systems need specific architectural constraints to balance cost and performance. The financial and computational cost of “good” results is unsustainable once we exceed certain thresholds. If we can’t make strategic tradeoffs when designing AI solutions, we’re simply building a product that costs more than it’s worth.

How Token Consumption Impacts Cost

Token consumption is now a measure of an AI system’s primary operating cost, also called the inference cost. The more tokens consumed, the higher the cost. However, not all tokens are the same. Input tokens represent information fed into the model, while output tokens represent information generated by it. Typically, output tokens cost 5-6x more than input tokens, largely because the model generates them one at a time, which takes more computation than reading the input.

As the technology has improved and new implementation strategies have emerged, product builders have been able to build much more capable AI solutions. While this has led to considerable increases in revenue and even profitability in several cases, many people are noticing that spending is still generally trending upwards. So even if they are getting better results, they’re also paying much more for them.

While cost per token has actually gone down 10x, token consumption has increased more than 100x, indicating an over 10x increase in spending.

Example:

Then → $50/1M Tokens x 100M Tokens/Month = $5,000/Month

Now → $5/1M Tokens x 10B Tokens/Month = $50,000/Month
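The arithmetic in this example can be reproduced with a small helper. This is a minimal sketch: the function name monthly_cost is ours, and the prices are the illustrative figures from the example, not real provider rates.

```python
def monthly_cost(price_per_million: float, tokens_per_month: float) -> float:
    """Return monthly spend in dollars for a flat per-token price."""
    return price_per_million * tokens_per_month / 1_000_000


then = monthly_cost(50.0, 100_000_000)     # $50 per 1M tokens, 100M tokens
now = monthly_cost(5.0, 10_000_000_000)    # $5 per 1M tokens, 10B tokens

print(f"Then: ${then:,.0f}/month")
print(f"Now:  ${now:,.0f}/month")
print(f"Spending multiple: {now / then:.0f}x")
```

A 10x price drop combined with a 100x rise in volume still leaves the bill 10x higher.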

Why Token Consumption Has Increased Substantially

Rising global AI adoption has increased token consumption overall. While this is partly due to the increase in users, the tasks people are using AI for are also amplifying consumption. People are now using AI for much more complex tasks, which demand greater “reasoning” effort and therefore consume more tokens.

AI’s non-deterministic behavior has also been driving up costs in production. Often, during development, testing, and even after the initial release, token consumption may be within the expected limits. However, once usage starts increasing, token consumption starts becoming highly variable, sometimes significantly increasing inference costs and latency.

AI agents have substantially increased workloads far beyond expectations. Agents are rapidly becoming the primary way to capitalize on AI’s “intelligence.” However, they consume a significant number of tokens. They also waste tokens due to sub-optimal strategies (e.g., unnecessarily complex approaches) or reasoning failures (e.g., mistakes, hallucinations, loops), driving up consumption.

Tokenmaxxing is another recent trend increasing token consumption. People are now maximizing token spending, driven by a belief that this will somehow maximize the potential “gains” from AI adoption. In reality, the actual value created still lags behind spending, yet many companies are consuming billions and even trillions of tokens each month.

The Cost-Performance Tradeoff

The implementation that produces the best possible results may not be the most token-efficient. For example, a multi-agent system can generally handle a complex workflow more reliably than a single-agent system, since it can use specialized agents for each task. However, multi-agent systems will consume a lot more tokens, so you end up paying more for the increase in performance.

Performance gains also rarely scale proportionally with token consumption. A 10x increase in consumption won’t produce a 10x improvement in output quality. People can deploy certain token optimization tactics to balance costs and performance, but they have to accept that every tactic comes with trade-offs. Generally, building high-performance AI systems will almost always be expensive.

Why Token Optimization Is Now A Major Product Concern

AI harnesses have a significant impact on token consumption.

An AI system’s harness directly influences token consumption, since it determines what to store, retrieve, and show to the model at each step. Specific architectural changes within the harness can improve the system’s overall token efficiency without sacrificing performance. Examples include choosing models based on task complexity, delivering limited, highly relevant context to the model, and directing the model towards task-specific tools.

Enhancing the token efficiency has a few key benefits:

Increased Cost-Efficiency: It can significantly reduce the system’s operating costs by lowering token spending.

Improved Computational Performance: It can reduce computational load, improving response times, scalability, and even reliability.

Improved Task Performance: It can focus the model’s attention on the most relevant content, improving reasoning and results.

When building AI solutions, we have to ask ourselves how much we’re willing to spend for better performance. High-quality results are not useful if the underlying costs are fundamentally unsustainable. You can’t absorb these costs indefinitely. Without harness-level token optimization, you can get stuck with huge AI bills when either usage increases massively or the system starts operating in highly token-inefficient ways.

Cost-efficiency is becoming increasingly important when building AI solutions. You can’t keep prioritizing capability over cost long-term. There’s always a risk of heavy usage leading to spending far exceeding revenue. For example, Anthropic had to adjust subscription plan limits after discovering that some users on their $200 per month Max plan were using almost $5,000 worth of tokens. If this can happen to a leading AI provider with large engineering teams who presumably plan for such issues, it can easily happen to smaller teams and solo developers.

Tactics For Optimizing Token Consumption

Token optimization requires implementing multiple architectural constraints. No single tactic will produce a huge decrease in token consumption, but collectively they do make a difference.

Minimize The Context Provided To The Model

AI systems accumulate a lot of context across interactions that quickly becomes irrelevant. When the harness does not manage context effectively, context windows can rapidly fill up. This overwhelms the model with unnecessary details, derailing task execution. When the relevant context is kept as slim as possible, the model stays focused on the right details, which can both improve performance and reduce costs.

Another aspect of context management is memory management. Since context naturally grows with long-horizon tasks, optimized memory systems can ensure that relevant information is “remembered” and unnecessary details are “forgotten,” which is especially helpful when the system must handle complex tasks. This can optimize token consumption and improve output quality because it limits the context to what is relevant.
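One simple way a harness can keep context slim is to pin essential messages and keep only the most recent turns that fit a token budget. The sketch below is an illustration under stated assumptions: the Message class, the pinned flag, and the rough four-characters-per-token estimate are all ours, and a production system would use the model’s real tokenizer and likely a smarter relevance policy than recency.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str
    content: str
    pinned: bool = False  # pinned messages (e.g. the system prompt) are always kept


def estimate_tokens(text: str) -> int:
    # Crude assumption: about 4 characters per token. Use a real tokenizer in practice.
    return max(1, len(text) // 4)


def trim_context(messages: list[Message], budget: int) -> list[Message]:
    """Keep pinned messages, then the most recent messages that fit the budget."""
    kept = [m for m in messages if m.pinned]
    remaining = budget - sum(estimate_tokens(m.content) for m in kept)
    recent: list[Message] = []
    # Walk backwards from the newest message and stop at the first one that does not fit.
    for m in reversed([m for m in messages if not m.pinned]):
        cost = estimate_tokens(m.content)
        if cost > remaining:
            break
        recent.append(m)
        remaining -= cost
    return kept + list(reversed(recent))


history = [
    Message("system", "You are a helpful analyst.", pinned=True),
    Message("user", "a" * 400),
    Message("assistant", "b" * 400),
    Message("user", "c" * 40),
]
trimmed = trim_context(history, budget=120)
print([m.role for m in trimmed])
```

With a 120-token budget, the oldest user message is dropped while the system prompt and the two latest turns survive.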

Direct The Models To The Right Tools

AI models need tools to apply their “intelligence.” While more tools make the system more capable, each additional tool increases the surface area for potential issues (e.g., reasoning failures, incorrect tool calls, bugs). When models use incorrect or irrelevant tools, they waste tokens and degrade performance. Since many tools also connect to paid services, this can increase spending on both tokens and external services.

The tool outputs can also be quite large (e.g., a full dataset, a lengthy report, a massive JSON response). So, using the wrong tools can both waste tokens and fill up the context window with irrelevant context. Therefore, the harness should expose only a selection of task-specific tools to the model. This increases the likelihood that the system will complete the task successfully while minimizing token consumption.
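A harness can implement this by tagging tools with the task types they serve and capping tool output before it reaches the context window. The following is a minimal sketch; the tool names, task types, and the 2,000-character cap are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Tool:
    name: str
    description: str
    task_types: frozenset[str] = field(default_factory=frozenset)


# Hypothetical tool registry for illustration only.
REGISTRY = [
    Tool("search_orders", "Look up orders by customer ID", frozenset({"support"})),
    Tool("issue_refund", "Refund an order", frozenset({"support"})),
    Tool("run_sql", "Run a read-only SQL query", frozenset({"analytics"})),
    Tool("plot_chart", "Render a chart from tabular data", frozenset({"analytics"})),
    Tool("send_email", "Send an email", frozenset({"support", "marketing"})),
]


def tools_for(task_type: str, registry: list[Tool] = REGISTRY) -> list[Tool]:
    """Return only the tools tagged for this task type."""
    return [t for t in registry if task_type in t.task_types]


def truncate_output(output: str, max_chars: int = 2_000) -> str:
    """Cap a tool result before it enters the context window."""
    if len(output) <= max_chars:
        return output
    return output[:max_chars] + f"\n[truncated {len(output) - max_chars} characters]"


print([t.name for t in tools_for("analytics")])
```

An analytics task sees two tools instead of five, and oversized tool results are shortened with a visible marker so the model knows data was omitted.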

Route Tasks To The Right Models

Inference costs and performance can vary significantly based on the specific model used. We can optimize these by efficiently routing simple tasks to cheaper models and complex tasks to more expensive ones. Some models can also be more token-efficient on certain tasks. By routing tasks to models based on model capability, task complexity, and token costs, we can balance cost and performance, ensuring that we only use the most capable (and most expensive) models when necessary.

There are two main ways to implement routing:

Static routing involves assigning entire workflows to specific models. Typically, you implement an eval to measure and compare task performance with different models. Then you choose models based on your cost and performance thresholds. While this approach can offer decent cost savings, it’s less flexible since certain models always handle certain tasks (e.g., Task A → Model A, Task B → Model B). The model assignments also have to be updated as new models are released and older models are deprecated.

Dynamic routing involves using a routing model (the “router”) to assign tasks to different models in real time based on task complexity. While the cost savings with this approach can be significant, designing an effective router is challenging, since the model could assign tasks incorrectly, which could degrade the overall performance in multi-step workflows. Many routers use simple keywords or semantic logic to assign tasks (e.g., “summarize” text → simpler model, “analyze” text → complex model), which can produce mixed results.
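The two approaches can be sketched side by side. The model names, task types, and keywords below are hypothetical, and the keyword heuristic is only a stand-in for a real routing model.

```python
# Hypothetical model names and task types, for illustration only.
STATIC_ROUTES = {
    "classification": "small-model",
    "summarization": "small-model",
    "contract_analysis": "large-model",
}
DEFAULT_MODEL = "large-model"  # fail safe: unknown tasks go to the capable model

COMPLEX_KEYWORDS = ("analyze", "compare", "plan", "debug", "prove")


def route_static(task_type: str) -> str:
    """Static routing: a fixed task-to-model table chosen from offline evals."""
    return STATIC_ROUTES.get(task_type, DEFAULT_MODEL)


def route_dynamic(prompt: str, long_prompt_chars: int = 4_000) -> str:
    """Dynamic routing: a naive keyword and length heuristic standing in for a router."""
    text = prompt.lower()
    if len(text) > long_prompt_chars or any(k in text for k in COMPLEX_KEYWORDS):
        return "large-model"
    return "small-model"


print(route_static("summarization"))
print(route_dynamic("Summarize this email"))
print(route_dynamic("Analyze the churn data"))
print(route_dynamic("Give a short explanation"))
```

The last call shows why keyword routers produce mixed results: “explanation” contains the substring “plan,” so a simple request is sent to the expensive model.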

Delegate Tasks To The Right Agents

In multi-agent systems, a main agent delegates tasks to specialized agents. These systems can consist of a single orchestrator agent with multiple subagents, or multiple orchestrator agents (each with its own subagents). A well-designed routing system ensures that the orchestrator identifies the relevant agents when reviewing the task requirements and passes each task to the right one, along with the relevant context, so the system uses its specialized agents effectively.

Each agent has a different harness, equipping it with different capabilities (e.g., a curated selection of tools, a custom system prompt, different permissions, specific models). This means they can be optimized to handle certain tasks. Since they work with a smaller subset of context, they are less likely to focus on the wrong details. They also have fewer tools, so they are less likely to use the wrong ones. Since multiple agents can be run in parallel, tasks can also be completed faster, improving overall response times.
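As a rough illustration of per-agent harnesses, the sketch below gives each subagent its own model, prompt, tools, and an allowlist of context keys, so the orchestrator hands over only what that agent needs. The AgentSpec structure, agent names, and keys are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSpec:
    name: str
    model: str
    system_prompt: str
    tools: tuple[str, ...]
    context_keys: tuple[str, ...]  # the only shared-state keys this agent may see


# Hypothetical subagents for illustration only.
AGENTS = {
    "research": AgentSpec("research", "small-model", "Find and cite sources.",
                          ("web_search",), ("question",)),
    "billing": AgentSpec("billing", "large-model", "Resolve billing issues.",
                         ("search_orders", "issue_refund"), ("customer_id", "question")),
}


def delegate(task_type: str, shared_state: dict) -> dict:
    """Build the scoped request an orchestrator would hand to one subagent."""
    agent = AGENTS[task_type]
    context = {k: shared_state[k] for k in agent.context_keys if k in shared_state}
    return {
        "agent": agent.name,
        "model": agent.model,
        "system": agent.system_prompt,
        "tools": list(agent.tools),
        "context": context,
    }


state = {
    "customer_id": "c-1",
    "question": "Why was I charged twice?",
    "chat_history": ["earlier turn"] * 50,
}
print(delegate("billing", state)["context"])
```

The long chat history never reaches either subagent, which keeps each agent’s context small.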

Cache Input Tokens And Reuse Them With Similar Requests

When we send a request to a model, the provider converts the input into tokens and processes all of them. Even when requests share most of their content (e.g., the same system prompt and tool definitions), the model will default to reprocessing those shared tokens each time, which is highly inefficient. Instead of paying full price repeatedly for the same input, we can cache it so the provider can reuse the work it has already done. Since this processing happens on the provider’s side, we access cached tokens from the model provider, typically at a much cheaper rate compared to new input tokens.

Whenever a new request comes in, the system checks whether existing cached tokens can be reused. This can reduce both token costs and response times, since the shared portion doesn’t have to be processed again. Caching works best when the majority of requests are largely static (e.g., requests to do the same thing with minor variations), because provider-side caches generally match on an identical prefix. It can also be applied when requests are semantically similar (e.g., requests to do the same thing phrased differently), which usually requires a semantic cache in the application layer. The latter can be trickier to implement because deciding what counts as a similar request can be challenging.
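To get a feel for the savings, we can estimate input spend when a large static portion of each request is served from cache. This is a back-of-the-envelope sketch: the function name, the price, and the 90% discount are assumptions for illustration, and real providers may add cache-write charges and expiry rules that are ignored here.

```python
def input_cost(prefix_tokens: int, suffix_tokens: int, requests: int,
               price_per_million: float, cached_discount: float) -> float:
    """Estimate input spend when the shared prefix is cached after the first request.

    cached_discount is the fraction saved on cached tokens (0.9 = 90% cheaper).
    Cache-write surcharges and cache expiry are ignored in this sketch.
    """
    full = price_per_million / 1_000_000
    cached = full * (1 - cached_discount)
    first = (prefix_tokens + suffix_tokens) * full
    rest = (requests - 1) * (prefix_tokens * cached + suffix_tokens * full)
    return first + rest


# 8,000 static tokens (system prompt, tool definitions) plus 200 new tokens per request.
without_cache = input_cost(8_000, 200, 1_000, price_per_million=3.0, cached_discount=0.0)
with_cache = input_cost(8_000, 200, 1_000, price_per_million=3.0, cached_discount=0.9)

print(f"Without caching: ${without_cache:.2f}")
print(f"With caching:    ${with_cache:.2f}")
```

The estimate only holds if the static content comes first in every request and stays byte-for-byte identical, which is why prompt layout matters as much as the caching feature itself.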

Cache Output Tokens And Reuse Them In New Requests

AI models produce outputs as tokens and then convert these to the appropriate format. When a later request references a previous output, that output is sent back to the model as input and, by default, processed again from scratch. Therefore, if we want to reuse the output in a future step without paying full price for it again, we have to make sure it is cached rather than simply resent. Fortunately, previous outputs can be cached as well, either as part of a cached prompt prefix or as stored results in the application.

Agents can benefit significantly from caching outputs from previous tasks. This reduces the amount of new information that the agent needs to process to complete its task. Workloads that frequently use the same information often see the biggest gains from caching. For example, if an agent needs to run the same analysis on historical data repeatedly, caching the results means we can reuse them instead of generating new ones, substantially reducing the costs of the workflow in some cases.
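One straightforward way to reuse previous results is an application-level cache keyed on the task and its inputs. Note that this is a different mechanism from provider-side caching, and the sketch below is only an illustration: call_model is a stand-in for a real model call, and the in-memory dictionary would be a persistent store in practice.

```python
import hashlib
import json

_cache: dict[str, str] = {}
stats = {"model_calls": 0}


def call_model(prompt: str) -> str:
    """Stand-in for a real (paid) model call; counts invocations."""
    stats["model_calls"] += 1
    return f"analysis of: {prompt}"


def cache_key(task: str, inputs: dict) -> str:
    """Derive a stable key from the task name and its inputs."""
    payload = json.dumps({"task": task, "inputs": inputs}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def cached_analysis(task: str, inputs: dict) -> str:
    """Return a stored result when the same task and inputs were seen before."""
    key = cache_key(task, inputs)
    if key not in _cache:
        _cache[key] = call_model(f"{task}: {json.dumps(inputs, sort_keys=True)}")
    return _cache[key]
```

Because historical data does not change, repeated requests for the same analysis hit the cache and never reach the model. Results that depend on fresh data would need an expiry policy, which is omitted here.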

Put Limits On Generated Outputs

When the model has to “think” about how to execute a task, it’s useful to define clear limits on how hard the model should think and how big its output should be. We can set “thinking budgets” to define this, so models don’t “overthink” on simpler tasks and waste tokens. Setting a thinking budget can ensure that the degree of “thinking” matches the task complexity. Many reasoning models have an explicit parameter to control this budget (e.g., thinking_budget on Gemini models, budget_tokens on Claude models).

We can also establish explicit constraints on outputs to give the model clear boundaries. For example, if the output is a text artifact, hard length limits in the prompts (e.g., generated reports → 10,000 characters max) can ensure the model doesn’t waste tokens on unnecessarily long responses. Another way to enforce this is at the API level, with a maximum output token limit that cuts off generation once the cap is reached, or a stop sequence, which is a string that ends generation as soon as the model emits it. Both have to be implemented carefully to avoid generating incomplete outputs.
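A harness can tie these limits to task complexity with a simple lookup. The sketch below uses generic field names and hypothetical tier values; mapping them onto a specific provider’s parameters is left to the reader. The finish reasons checked at the end are the values we understand common APIs to return when a response hits its output cap, so treat them as an assumption to verify.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GenerationLimits:
    thinking_budget: int    # max tokens the model may spend on reasoning
    max_output_tokens: int  # hard cap on the visible response


# Hypothetical tiers; tune these against your own evals.
LIMITS_BY_COMPLEXITY = {
    "simple": GenerationLimits(thinking_budget=0, max_output_tokens=512),
    "moderate": GenerationLimits(thinking_budget=2_048, max_output_tokens=2_048),
    "complex": GenerationLimits(thinking_budget=16_384, max_output_tokens=8_192),
}


def limits_for(complexity: str) -> GenerationLimits:
    """Look up limits for a task tier, defaulting to the middle tier."""
    return LIMITS_BY_COMPLEXITY.get(complexity, LIMITS_BY_COMPLEXITY["moderate"])


def was_truncated(finish_reason: str) -> bool:
    """Flag responses cut off by the output cap so they can be retried or continued."""
    return finish_reason in {"length", "max_tokens"}


print(limits_for("simple"))
print(was_truncated("length"))
```

Checking for truncation matters because a hard cap trades wasted tokens for the risk of an incomplete answer.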

Batch Requests When Possible

When AI systems have to perform longer, computationally intensive tasks (e.g., classifying data, generating embeddings), it’s often more cost-efficient to do these asynchronously. Model providers offer lower pricing on batch requests. For example, OpenAI’s Batch API processes asynchronous requests at 50% lower costs. Therefore, we can optimize token spending by batching requests for non-urgent work. If you don’t need the results immediately, you can take advantage of substantial cost savings with this approach, especially when dealing with token-intensive workflows.
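Batch jobs are usually submitted as a file with one request per line. The sketch below builds such lines for a classification job, following the JSONL request shape in OpenAI’s Batch API documentation as we understand it. The model name, prompt, and custom_id scheme are placeholders, and the upload and job-creation steps are omitted, so check the current documentation before relying on this.

```python
import json


def build_batch_lines(texts: list[str], model: str = "gpt-4o-mini") -> list[str]:
    """Turn non-urgent classification jobs into JSONL lines for a batch upload."""
    lines = []
    for i, text in enumerate(texts):
        request = {
            "custom_id": f"classify-{i}",  # used to match results back to inputs
            "method": "POST",
            "url": "/v1/chat/completions",
            "body": {
                "model": model,
                "messages": [
                    {"role": "system",
                     "content": "Label the sentiment as positive, negative, or neutral."},
                    {"role": "user", "content": text},
                ],
                "max_tokens": 5,  # a label needs only a few output tokens
            },
        }
        lines.append(json.dumps(request))
    return lines


batch = build_batch_lines(["Great product", "Arrived broken"])
print("\n".join(batch))
```

Each result comes back tagged with its custom_id, so the order of completion does not matter.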

Strategically Use Server-Side Computation

AI systems are often connected to multiple systems, tools, and MCP servers. For many tasks, we can use this functionality programmatically instead of relying on the model. For example, if the system connects to a database, we can have the model trigger case-specific queries (sorting, filtering, or aggregating data as necessary) and then pass only the relevant results back to the model. While the model could process the raw data directly, server-side computations can potentially save hundreds or thousands of tokens per request. They can also slightly improve reliability, since we’re replacing a non-deterministic process with a deterministic one. At scale, these small optimizations could considerably improve cost-efficiency and performance.
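As a small illustration, the database can do the aggregation and hand the model a few summary lines instead of every row. The table, columns, and data below are hypothetical, and an in-memory SQLite database stands in for a production system.

```python
import sqlite3


def revenue_summary(conn: sqlite3.Connection, region: str, top_n: int = 3) -> str:
    """Aggregate in SQL and return a compact summary for the model's context."""
    rows = conn.execute(
        """
        SELECT product, SUM(amount) AS total
        FROM sales
        WHERE region = ?
        GROUP BY product
        ORDER BY total DESC
        LIMIT ?
        """,
        (region, top_n),
    ).fetchall()
    return "\n".join(f"{product}: {total:.2f}" for product, total in rows)


# Hypothetical in-memory table standing in for a production database.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, product TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?, ?)",
    [("EU", "widget", 120.0), ("EU", "gadget", 80.0), ("EU", "widget", 30.0),
     ("US", "widget", 500.0)],
)

summary = revenue_summary(conn, "EU")
print(summary)
```

The model receives two short lines rather than the full table, and the totals are computed deterministically.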

Why Monitoring Token Consumption Is Useful

It can reveal opportunities to further optimize cost and performance.

The monthly AI bill gives you little insight into how token-intensive each task really is. Even workflow-level tracking only gives you a high-level picture. However, we can implement token-level cost tracking to get deeper insight into the system as a whole. We can use AI observability solutions (e.g., LangSmith, Langfuse) to track the traces (end-to-end records) from every single model interaction. This can provide useful insights, such as token consumption, latency, tool calls, and so on, revealing token optimization opportunities.

For AI applications with high volumes of users and heavy usage, this granular insight is critical. It lets you see exactly where tokens are being spent across each step of a workflow. For example, you could identify token-intensive tasks where you could explore alternative models or identify tasks that may benefit from a specialized agent. Monitoring can also help flag and fix both cost and performance issues, since traces can be used to diagnose the root causes directly.
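Once traces are exported, even a simple aggregation shows which steps dominate spending. The sketch below assumes a hypothetical trace format and illustrative prices; real observability tools export richer records, and actual rates depend on the provider and model.

```python
from collections import defaultdict

# Hypothetical trace records; real observability tools export richer data.
traces = [
    {"step": "retrieve", "input_tokens": 1_200, "output_tokens": 150},
    {"step": "plan", "input_tokens": 3_000, "output_tokens": 900},
    {"step": "retrieve", "input_tokens": 1_100, "output_tokens": 140},
    {"step": "write_report", "input_tokens": 6_500, "output_tokens": 2_400},
]

# Illustrative prices in dollars per 1M tokens, not real provider rates.
INPUT_PRICE, OUTPUT_PRICE = 3.0, 15.0


def cost_by_step(records: list[dict]) -> dict[str, float]:
    """Sum the dollar cost of each workflow step across all traces."""
    totals: dict[str, float] = defaultdict(float)
    for r in records:
        totals[r["step"]] += (
            r["input_tokens"] * INPUT_PRICE + r["output_tokens"] * OUTPUT_PRICE
        ) / 1_000_000
    return dict(totals)


# Rank steps from most to least expensive.
ranked = sorted(cost_by_step(traces).items(), key=lambda kv: kv[1], reverse=True)
for step, cost in ranked:
    print(f"{step:<14} ${cost:.4f}")
```

In this made-up data, report writing accounts for most of the cost, so it would be the first candidate for a cheaper model or tighter output limits.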

It can reveal opportunities to run workflows on owned infrastructure.

Inference costs scale with token consumption. After a certain threshold, the cost of paying a model provider starts to exceed the cost of running models on owned infrastructure. This tipping point will be different for each product, but you won’t know what it is without closely monitoring token use. Monitoring can tell us which workloads can potentially be run on local, open-source models. Insight into the task-level token consumption can also help us understand the infrastructure requirements for making this switch.

Operating AI systems entirely on owned infrastructure may not be an option for many people, since the cost can be substantial. However, it is possible to route certain workflows to your own infrastructure with a hybrid setup. While this sometimes involves considerable initial investment, it’s often a more cost-effective option long-term. Even if costs are not a major concern, we may want to run certain sensitive workflows locally. When we know the specific token consumption, we can make informed choices about how to do this.
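The tipping point can be estimated with a simple break-even calculation. This is a deliberately simplified model: it assumes a fixed monthly infrastructure cost and flat per-token prices, and all figures are hypothetical. It also ignores engineering time and any quality differences between models.

```python
def break_even_tokens(fixed_monthly_cost: float, api_price_per_million: float,
                      self_hosted_price_per_million: float) -> float:
    """Monthly token volume above which self-hosting is cheaper than the API.

    Solves api_price * t = fixed + self_hosted_price * t, with t in millions of tokens.
    """
    margin = api_price_per_million - self_hosted_price_per_million
    if margin <= 0:
        return float("inf")  # self-hosting never pays off at these prices
    return fixed_monthly_cost / margin * 1_000_000


# Hypothetical figures: $8,000/month for hardware, $5 vs. $1 per 1M tokens.
threshold = break_even_tokens(8_000, 5.0, 1.0)
print(f"Break-even at {threshold:,.0f} tokens per month")
```

Comparing this threshold against the monitored monthly volume for each workload indicates which ones, if any, are worth moving.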

Conclusion

Across industries, there’s been an intense focus on making AI solutions more powerful and useful. However, it’s becoming harder to ignore the cost of consistently delivering good results, especially at scale. AI systems can achieve these results in highly inefficient ways, increasing token spending, computational load, response times, and resource usage. Even if the system technically “works,” these problems can become an immense burden.

Excess token consumption is both a major cost and a performance issue. Token consumption will only grow as AI solutions become more capable because people will use them for more tasks and more complex workflows. Product builders who treat token efficiency as a core architectural concern will be able to balance cost and performance, creating a much more sustainable business model.

